You need to write a custom Azure Policy that denies any Azure OpenAI resource without a private endpoint. Simple enough requirement. An hour later, you’re still hunting for the right resource provider alias, your JSON nesting is wrong, and ARM rejects the policy on every test run. Azure Policy authoring is a legitimate skill, and the schema is not forgiving. Azure OpenAI can produce a near-complete draft in about 30 seconds. That’s not a replacement for understanding what the policy does; it’s a reason to stop wasting time on syntax archaeology.

Azure Policy is the enforcement layer for the entire Privacy-First Blueprint built across this series. Every control (Private Link, Managed Identity, and Zero Data Retention) needs a corresponding policy to prevent configuration drift. In 2026, we are moving toward “Agentic Governance,” where AI agents shift compliance left, auditing Bicep and Terraform templates for gaps before they ever reach Azure Resource Manager (ARM).

1. AI-Assisted Azure Policy Authoring

Authoring a policy definition requires the correct Policy Alias. To disable public access to Azure OpenAI, for example, you must target the Microsoft.CognitiveServices/accounts/publicNetworkAccess alias. AI models trained on Azure documentation are good at this specific mapping—better than a grep through the ARM provider reference.

Few-Shot Policy Drafting

For the highest accuracy from Azure OpenAI, use a Few-Shot Prompt. Provide the model with correct examples of different policy effects (e.g., Audit, Deny, Modify) to establish the structural baseline.

    # Example: Prompt for GPT-4o
    "Requirement: Deny any Azure OpenAI resource that does not have its public network access set to 'Disabled'.
    Target Alias: Microsoft.CognitiveServices/accounts/publicNetworkAccess.
    Use 'Indexed' mode and include parameters for the allowed effect."
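The request above is only the final turn of a few-shot prompt; the examples come first. A minimal sketch of how the message list might be assembled is below. The two worked examples, the system prompt wording, and the function name `build_few_shot_messages` are illustrative assumptions, not part of this series; swap in policy rules you have already validated.

```python
import json

# Hypothetical worked examples; replace with rules you have already validated.
FEW_SHOT_EXAMPLES = [
    {
        "requirement": "Audit storage accounts that allow non-HTTPS traffic.",
        "policy_rule": {
            "if": {
                "allOf": [
                    {"field": "type", "equals": "Microsoft.Storage/storageAccounts"},
                    {
                        "field": "Microsoft.Storage/storageAccounts/supportsHttpsTrafficOnly",
                        "equals": "false",
                    },
                ]
            },
            "then": {"effect": "audit"},
        },
    },
    {
        "requirement": "Deny resources created outside the allowed locations.",
        "policy_rule": {
            "if": {"not": {"field": "location", "in": "[parameters('allowedLocations')]"}},
            "then": {"effect": "deny"},
        },
    },
]

# Assumed wording; tune it for your own deployment.
SYSTEM_PROMPT = (
    "You write Azure Policy definitions. Reply with JSON only, "
    "following the structure of the examples."
)


def build_few_shot_messages(requirement: str, alias: str) -> list[dict]:
    """Return chat messages: system prompt, example pairs, then the real request."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    for example in FEW_SHOT_EXAMPLES:
        # Each example is a user turn (requirement) and an assistant turn (rule JSON).
        messages.append({"role": "user", "content": f"Requirement: {example['requirement']}"})
        messages.append(
            {"role": "assistant", "content": json.dumps(example["policy_rule"], indent=2)}
        )
    messages.append(
        {
            "role": "user",
            "content": (
                f"Requirement: {requirement}\n"
                f"Target Alias: {alias}\n"
                "Use 'Indexed' mode and include parameters for the allowed effect."
            ),
        }
    )
    return messages
```

The returned list can be passed as the `messages` argument of whatever chat completions client you use against your Azure OpenAI deployment.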

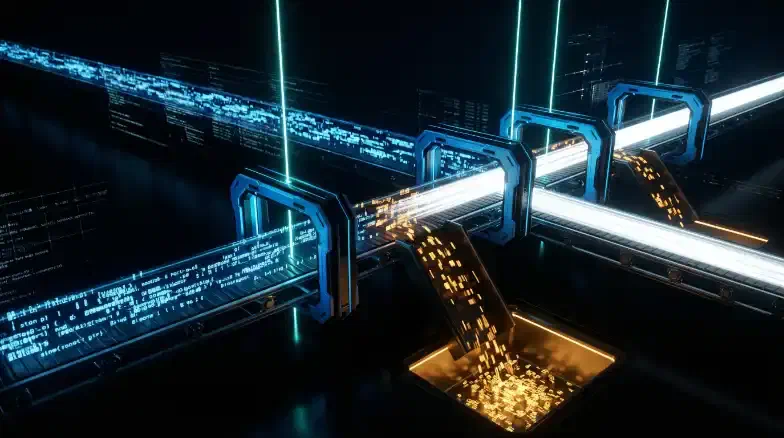
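For reference when reviewing a draft, here is a sketch of the kind of definition the prompt is asking for, built as a Python dictionary and printed as JSON. The `kind` filter, the allowed effect values, and the `Audit` default are assumptions chosen for illustration; the alias and the `Indexed` mode come from the prompt.

```python
import json

ALIAS = "Microsoft.CognitiveServices/accounts/publicNetworkAccess"


def build_policy_definition() -> dict:
    """Build an illustrative definition that flags OpenAI accounts with public access."""
    return {
        "mode": "Indexed",
        "parameters": {
            "effect": {
                "type": "String",
                # Assumed set of effects; start in Audit and tighten later.
                "allowedValues": ["Audit", "Deny", "Disabled"],
                "defaultValue": "Audit",
            }
        },
        "policyRule": {
            "if": {
                "allOf": [
                    {"field": "type", "equals": "Microsoft.CognitiveServices/accounts"},
                    # Assumption: limit the rule to OpenAI accounts only.
                    {"field": "kind", "equals": "OpenAI"},
                    {"field": ALIAS, "notEquals": "Disabled"},
                ]
            },
            "then": {"effect": "[parameters('effect')]"},
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_policy_definition(), indent=2))
```

When comparing a model's draft against this shape, the points worth checking by hand are the alias spelling, the `notEquals` comparison (which also matches resources where the property is unset), and that the effect is parameterized rather than hard-coded.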

2. The Policy-as-Code Pipeline

Policies should be managed with the same rigor as application code. A Policy-as-Code repository ensures that every change is versioned, peer-reviewed, and tested before being applied at the Management Group level.

The Policy-as-Code CI/CD Loop

CI/CD Validation

In your GitHub Actions workflow, use the az policy definition create command in a temporary scope to validate the JSON schema and rule logic. ARM will reject any definition that uses an invalid alias or incorrect nesting—which is exactly the kind of fast feedback you want before a policy reaches a Management Group.

    # GitHub Actions: Validate Policy Definition
    - name: Validate Policy JSON
      run: |
        # Requires a Service Principal with 'Resource Policy Contributor'
        az policy definition create \
          --name "validate-openai-policy" \
          --rules "./policies/deny-openai-public-access/policy.json" \
          --mode Indexed \
          --only-show-errors
        az policy definition delete --name "validate-openai-policy"
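A cheap local pre-check can catch the most basic nesting mistakes before the pipeline spends a round trip to ARM. The sketch below is an assumption about what such a check might look like, not a replacement for the ARM validation above: it does not verify aliases, and the effect list is deliberately partial. The function name `precheck_policy_rule` is hypothetical.

```python
# Partial list of effect names (lowercased); not exhaustive, extend as needed.
KNOWN_EFFECTS = {
    "audit",
    "auditifnotexists",
    "append",
    "deny",
    "denyaction",
    "deployifnotexists",
    "disabled",
    "manual",
    "modify",
}


def precheck_policy_rule(rule: dict) -> list[str]:
    """Return a list of structural problems found in a policy rule (empty if none)."""
    problems = []
    if not isinstance(rule.get("if"), dict):
        problems.append("missing or non-object 'if' block")
    then = rule.get("then")
    if not isinstance(then, dict) or "effect" not in then:
        problems.append("missing 'then.effect'")
    else:
        effect = str(then["effect"])
        # A parameterized effect such as "[parameters('effect')]" is resolved by ARM.
        is_parameterized = effect.startswith("[parameters(")
        if not is_parameterized and effect.lower() not in KNOWN_EFFECTS:
            problems.append(f"unrecognized effect: {effect!r}")
    return problems
```

A step that loads the rule file with `json.load` and fails the job when the returned list is non-empty would run in milliseconds and needs no Azure credentials.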

3. Shift-Left: Auditing IaC for Security Drift

Azure Policy is typically reactive: it evaluates a resource only when the deployment request reaches ARM, or afterward during compliance scans. Shift-Left Auditing uses AI to scan Bicep and Terraform files in the Pull Request, identifying compliance gaps before they trigger a policy denial.

Shift-Left AI Audit Flow

By auditing the semantic intent of the code, AI can catch workarounds—like a developer adding a parameter that defaults to an insecure value—that traditional scanners miss.
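To make the workaround concrete, the sketch below pairs a hypothetical Bicep snippet, in which a parameter quietly defaults to `'Enabled'`, with a function that wraps IaC source in an audit prompt. The snippet, the control list, the prompt wording, and the name `build_audit_messages` are all assumptions for illustration.

```python
# Hypothetical Bicep: the property looks parameterized, but the default is insecure.
BICEP_SNIPPET = """\
param publicAccess string = 'Enabled'

resource openai 'Microsoft.CognitiveServices/accounts@2023-05-01' = {
  name: 'ai-prod-01'
  location: resourceGroup().location
  kind: 'OpenAI'
  sku: { name: 'S0' }
  properties: {
    publicNetworkAccess: publicAccess
  }
}
"""

# Assumed control wording; derive yours from the policies you actually assign.
CONTROLS = [
    "publicNetworkAccess on Azure OpenAI accounts must resolve to 'Disabled'.",
]


def build_audit_messages(iac_source: str, controls: list[str]) -> list[dict]:
    """Wrap IaC source and the required controls in a chat prompt for review."""
    control_lines = "\n".join(f"- {control}" for control in controls)
    system = (
        "You review Bicep and Terraform for compliance gaps. "
        "Trace parameter defaults and variables to the values they resolve to. "
        "Report each gap as a JSON object with 'line', 'control', and 'reason'.\n"
        f"Required controls:\n{control_lines}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": iac_source},
    ]
```

A scanner that only matches the literal text `publicNetworkAccess: 'Enabled'` would pass this file; asking the model to resolve defaults is what surfaces the gap.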

4. Explaining Violations in Plain Language

The most frustrating part of compliance is seeing an error like FieldValueMismatch on Microsoft.CognitiveServices/accounts/publicNetworkAccess. You know something is wrong. You don’t know what to do about it.

AI Remediation Guides

Pass the policyState record to Azure OpenAI to generate a fix guide in plain language. The translation from compliance error to actionable instruction takes seconds.

Before: FieldValueMismatch: publicNetworkAccess

After (AI-Generated): “Your Azure OpenAI resource ‘ai-prod-01’ is non-compliant. The ‘Privacy-First Blueprint’ requires that public network access be Disabled. To fix this, update line 12 of your Bicep file to set publicNetworkAccess: 'Disabled' or run the following Azure CLI command: az cognitiveservices account update ... --public-network-access Disabled.”

This is where AI earns its place in a compliance workflow. Policy authoring is a one-time cost; violation triage happens every sprint.
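A minimal sketch of the prompt construction follows. It forwards only a handful of fields from the policy state record, which keeps the prompt short and avoids sending more of the record than the model needs. The field selection, the sample record, the prompt wording, and the name `build_remediation_messages` are assumptions.

```python
import json

# Fields forwarded to the model; everything else in the record is dropped.
FORWARDED_FIELDS = (
    "resourceId",
    "resourceType",
    "policyDefinitionName",
    "policyAssignmentName",
    "complianceState",
)


def build_remediation_messages(
    policy_state: dict, blueprint: str = "Privacy-First Blueprint"
) -> list[dict]:
    """Build a chat prompt asking for a plain-language fix for one violation."""
    trimmed = {key: policy_state[key] for key in FORWARDED_FIELDS if key in policy_state}
    system = (
        f"You explain Azure Policy violations against the '{blueprint}'. "
        "State what is non-compliant, why the control exists, and one concrete fix. "
        "If you are unsure of an exact command or flag, say so instead of guessing."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": json.dumps(trimmed, indent=2)},
    ]


# Hypothetical record, shaped like one entry of a policy state listing.
SAMPLE_STATE = {
    "resourceId": (
        "/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/rg-ai"
        "/providers/Microsoft.CognitiveServices/accounts/ai-prod-01"
    ),
    "resourceType": "Microsoft.CognitiveServices/accounts",
    "policyDefinitionName": "deny-openai-public-access",
    "policyAssignmentName": "privacy-first-openai",
    "complianceState": "NonCompliant",
    "timestamp": "2026-01-15T09:30:00Z",
}
```

Treat the generated guide as a draft: any CLI command or file location it suggests should be checked before it goes into a ticket.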

5. Microsoft Defender for Cloud Integration

In 2026, Microsoft Defender for Cloud (MDC) acts as the central aggregator for AI compliance. Use the Custom Recommendations feature to create findings based on Azure Resource Graph (KQL) queries.

    // ARG Query: Find OpenAI resources with public access
    resources
    | where type == "microsoft.cognitiveservices/accounts"
    | where properties.publicNetworkAccess != "Disabled"
    | project id, name, subscriptionId

Key Takeaways

- AI Authoring is Safer: AI handles the syntax; you review the logic.

- Shift-Left with Semantic Audit: Use LLMs to scan IaC code in PRs to catch gaps before they reach production.

- Audit Mode First: Always assign a new policy in Audit mode for 48 hours to measure impact before switching to Deny.

- Policy is the Contract: Your Azure Policy repository is the executable contract for your Landing Zone’s security posture.

The Privacy-First AI DevOps Blueprint is now complete. By integrating these eight layers—from Private Link to Continuous Compliance—you have built a zero-trust environment where AI is a secure productivity multiplier.