You built a CI/CD tool that sends code diffs to Azure OpenAI for automated review comments. It works great. Three months later, a security audit lands on your desk: those diffs have been carrying database connection strings, AWS access keys, and internal IP addresses, verbatim, to the LLM. The pipeline never had a gate. Direct application-to-model calls bypass every opportunity for centralized inspection, sanitization, or audit logging. You aren’t the first engineer this has happened to.

Azure API Management (APIM), positioned as an AI Gateway, is that gate. It transforms Azure OpenAI from a collection of scattered, unmanaged endpoints into a governed platform. In 2026, APIM has evolved into a native GenAI Gateway. It provides a unified control plane to enforce token-based rate limiting, redact PII at the gateway before prompts reach the model, and load balance across multiple regional deployments.

1. APIM as the AI Gateway

Without a gateway, every application team manages its own authentication, rate limiting, and logging, and the platform has no visibility into aggregate AI usage or cost. APIM introduces a single path for all AI traffic. Policies defined at the gateway apply to every consumer, from local dev scripts to production CI/CD pipelines, with no changes to application code.

Choosing the Right Tier

For a production AI Gateway, you must use the Standard v2 or Premium tiers. These tiers provide the VNet integration required to communicate with the Private Endpoints of your Azure OpenAI resources. The Consumption tier is strictly ineligible as it lacks the networking capabilities to reach isolated backends.

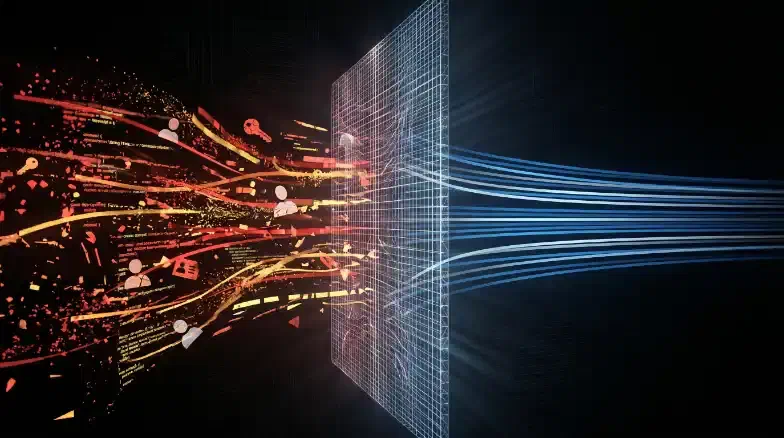

The Unified AI Gateway Architecture

Every request travels through an inbound policy chain before it reaches the model, and every response passes through an outbound chain before it reaches the caller. That’s the architecture; the rest of this post fills in those boxes.
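As a mental model, the two chains behave like ordered lists of functions wrapped around the model call. The Python below is a toy simulation, not APIM code. Requests and responses are plain dicts, and `redact_secrets`, `fake_model`, and `audit` are hypothetical stand-ins for real policies. APIM's actual policy document also has `backend` and `on-error` sections, which this sketch leaves out.

```python
from typing import Callable, Dict, List

# Toy stand-ins: a request/response is a plain dict, a policy is a function on it.
Message = Dict[str, str]
Policy = Callable[[Message], Message]


def run_gateway(request: Message, inbound: List[Policy],
                backend: Policy, outbound: List[Policy]) -> Message:
    """Apply inbound policies in order, call the model, then apply outbound policies."""
    for policy in inbound:       # e.g. authentication, redaction, token limits
        request = policy(request)
    response = backend(request)  # the Azure OpenAI call
    for policy in outbound:      # e.g. audit logging
        response = policy(response)
    return response


# Usage: record the order in which each stage runs.
trace: List[str] = []


def redact_secrets(req: Message) -> Message:
    trace.append("inbound")
    return {**req, "prompt": req["prompt"].replace("hunter2", "[REDACTED]")}


def fake_model(req: Message) -> Message:
    trace.append("backend")
    return {"completion": "Reviewed: " + req["prompt"]}


def audit(resp: Message) -> Message:
    trace.append("outbound")
    return resp


result = run_gateway({"prompt": "password=hunter2"}, [redact_secrets], fake_model, [audit])
print(trace)   # ['inbound', 'backend', 'outbound']
print(result)  # {'completion': 'Reviewed: password=[REDACTED]'}
```

The key property is ordering. The model only ever sees what the inbound chain hands it, so the secret never reaches it.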

2. Backend Configuration: Private OpenAI Endpoints

The first step is configuring APIM to use your private Azure OpenAI instances as backends. In 2026, we use the Backend Pool and Circuit Breaker features to handle high availability and regional failover natively.

Implementing Circuit Breakers

A single OpenAI deployment has a fixed Tokens Per Minute (TPM) quota. To prevent outages during peak CI/CD runs, pool multiple regional deployments. The circuit breaker on each backend watches for 429 Too Many Requests responses and trips that backend. The backend pool then routes traffic to the remaining healthy regions until the trip duration expires.

```bicep
// Backend with native circuit breaker for Azure OpenAI
param apimName string

resource openAiBackend 'Microsoft.ApiManagement/service/backends@2023-09-01-preview' = {
  name: '${apimName}/openai-eastus'
  properties: {
    url: 'https://openai-eastus.openai.azure.com/openai'
    protocol: 'http'
    circuitBreaker: {
      rules: [
        {
          name: 'openAiBreakerRule' // each circuit breaker rule is named
          failureCondition: {
            count: 1 // a single 429 trips the breaker
            interval: 'PT10S'
            statusCodeRanges: [
              {
                min: 429
                max: 429
              }
            ]
          }
          tripDuration: 'PT10S'
          acceptRetryAfter: true // use the backend's Retry-After value to honor OpenAI retry windows
        }
      ]
    }
  }
}
```
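The failover behavior can be modeled locally to reason about trip windows. This Python sketch is a simplified simulation, not APIM's implementation. It assumes a priority-only pool (real APIM pools also support weights), and `openai-swedencentral` is a hypothetical second region.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Backend:
    """One regional deployment guarded by a 429-triggered circuit breaker."""
    name: str
    trip_seconds: float = 10.0  # mirrors tripDuration: 'PT10S'
    open_until: float = 0.0     # while now < open_until the breaker is open (backend skipped)

    def is_available(self, now: float) -> bool:
        return now >= self.open_until

    def record_status(self, status: int, now: float,
                      retry_after: Optional[float] = None) -> None:
        # count: 1 means a single 429 trips the breaker.
        if status == 429:
            # acceptRetryAfter: true -> honor the backend's Retry-After when present.
            duration = retry_after if retry_after is not None else self.trip_seconds
            self.open_until = now + duration


@dataclass
class BackendPool:
    """Priority-ordered pool: send traffic to the first backend whose breaker is closed."""
    backends: List[Backend] = field(default_factory=list)

    def pick(self, now: float) -> Optional[Backend]:
        for backend in self.backends:
            if backend.is_available(now):
                return backend
        return None  # every region is tripped; the gateway has nowhere to route


pool = BackendPool([Backend("openai-eastus"), Backend("openai-swedencentral")])
pool.backends[0].record_status(429, now=100.0, retry_after=30.0)
print(pool.pick(now=101.0).name)  # openai-swedencentral
print(pool.pick(now=131.0).name)  # openai-eastus
```

Setting `count: 1` trips on the first 429. That is aggressive but sensible for OpenAI: a 429 means the deployment's TPM window is exhausted, and retrying it immediately only wastes latency.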

[Figure: Regional circuit breaker logic]

Keyless Authentication

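Never share OpenAI API keys with application teams. Instead, enable APIM’s System-Assigned Managed Identity and grant it the Cognitive Services OpenAI User role on each backend Azure OpenAI resource. The authentication-managed-identity policy then acquires a Microsoft Entra ID token for that identity and sends it to the backend in the Authorization header, so application teams never handle an OpenAI key.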

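```xml
<inbound>
    <base />
    <!-- Acquire an Entra ID token with APIM's managed identity and send it to the backend -->
    <authentication-managed-identity resource="https://cognitiveservices.azure.com" />
    <!-- Route to the backend pool that groups the regional deployments -->
    <set-backend-service backend-id="openai-pool" />
</inbound>
```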
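From the application's side, keyless means the caller never holds an Azure OpenAI credential. The sketch below builds (but does not send) a chat-completions request to the gateway using only an APIM subscription key. It makes four assumptions; adjust each one to match your instance:

- a hypothetical gateway host, `contoso-apim.azure-api.net`
- an API published under the `openai` path suffix
- APIM's default `Ocp-Apim-Subscription-Key` header, which can be renamed per API
- the `2024-10-21` API version

The deployment name `gpt-4o` is also illustrative.

```python
import json
import urllib.request

# Hypothetical gateway URL; replace with your APIM instance's gateway hostname.
GATEWAY = "https://contoso-apim.azure-api.net"


def build_chat_request(deployment: str, subscription_key: str,
                       prompt: str, api_version: str = "2024-10-21") -> urllib.request.Request:
    """Build (but do not send) a chat-completions call routed through APIM.

    The caller holds only an APIM subscription key. No Azure OpenAI key appears
    here: APIM attaches its own Entra ID token on the way to the backend.
    """
    url = (f"{GATEWAY}/openai/deployments/{deployment}/chat/completions"
           f"?api-version={api_version}")
    body = json.dumps({"messages": [{"role": "user", "content": prompt}]}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # APIM's default subscription-key header name (configurable per API).
            "Ocp-Apim-Subscription-Key": subscription_key,
        },
    )


req = build_chat_request("gpt-4o", "<apim-subscription-key>", "Review this diff")
print(req.get_method(), req.full_url)
```

Revoking or rotating a team's access then means rotating an APIM subscription key. The OpenAI resources themselves are never touched.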

3. Inbound Policy Chain: Prompt Sanitization

The primary value of the gateway is enforcing what reaches the LLM. Redacting a secret at the inbound layer is worth ten outbound filters; if the model never sees the secret, it cannot be leaked in a completion or stored in Microsoft’s logs.

PII and Secret Redaction

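For fast, low-overhead sanitization, use regex patterns in your inbound policy to redact SSNs, emails, and common credential formats (e.g., the AKIA prefix of AWS access key IDs or the AccountKey= segment of Azure Storage connection strings). Regex redaction is best-effort: it only catches secrets that match a known shape.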

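```xml
<inbound>
    <!-- Redact SSNs, emails, AWS access key IDs, and Azure Storage account keys.
         The AccountKey pattern stops at ';', quotes, backslashes, or whitespace so the JSON body stays valid. -->
    <set-body>@{
        string body = context.Request.Body.As<string>(preserveContent: true);
        body = Regex.Replace(body, @"\b\d{3}-\d{2}-\d{4}\b", "[SSN_REDACTED]");
        body = Regex.Replace(body, @"[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}", "[EMAIL_REDACTED]");
        body = Regex.Replace(body, @"\bAKIA[0-9A-Z]{16}\b", "[AWS_KEY_REDACTED]");
        body = Regex.Replace(body, @"AccountKey=[^;""\\\s]+", "AccountKey=[REDACTED]");
        return body;
    }</set-body>
</inbound>
```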
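To test patterns before deploying a policy, the same rules can be run in Python against sample diffs. This sketch uses the SSN and email patterns from the policy above, plus patterns for the AKIA and AccountKey= credential formats mentioned earlier. The placeholder strings are illustrative, and Python's `re` syntax matches .NET's for these particular expressions.

```python
import re

# Ordered (pattern, replacement) pairs applied to the raw request text.
REDACTIONS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN_REDACTED]"),
    (re.compile(r"[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}"), "[EMAIL_REDACTED]"),
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    (re.compile(r"\bAKIA[0-9A-Z]{16}\b"), "[AWS_KEY_REDACTED]"),
    # Azure Storage keys: stop at ';', quotes, backslashes, or whitespace so JSON stays valid.
    (re.compile(r'AccountKey=[^;"\\\s]+'), "AccountKey=[REDACTED]"),
]


def redact(text: str) -> str:
    """Apply every pattern in order and return the sanitized text."""
    for pattern, replacement in REDACTIONS:
        text = pattern.sub(replacement, text)
    return text


sample = ("SSN 123-45-6789, key AKIAABCDEFGHIJKLMNOP, "
          "AccountName=x;AccountKey=abc123==;EndpointSuffix=core.windows.net")
print(redact(sample))
# SSN [SSN_REDACTED], key [AWS_KEY_REDACTED], AccountName=x;AccountKey=[REDACTED];EndpointSuffix=core.windows.net
```

Because the gateway redacts the serialized JSON body, each replacement must avoid consuming JSON delimiters. That is why the AccountKey pattern excludes quotes and backslashes.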

For higher accuracy, use the send-request policy to call the Azure AI Language PII detection endpoint before forwarding the prompt to OpenAI. The extra round trip adds latency (roughly 200 ms, depending on payload size and region). That is a reasonable trade-off for SOC 2 or HIPAA environments where regex alone isn’t sufficient.
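The request and response shapes for that call can be sketched as follows. This assumes the Language service's `analyze-text` REST operation with `kind` set to `PiiEntityRecognition` (API version `2023-04-01`); verify the field names against the API version you deploy. The sample response is hand-written for illustration, not captured from the service.

```python
from typing import Any, Dict


def build_pii_request(text: str, language: str = "en") -> Dict[str, Any]:
    """Body for POST {language-endpoint}/language/:analyze-text?api-version=2023-04-01."""
    return {
        "kind": "PiiEntityRecognition",
        "analysisInput": {"documents": [{"id": "1", "language": language, "text": text}]},
        "parameters": {"modelVersion": "latest"},
    }


def extract_redacted_text(response: Dict[str, Any]) -> str:
    """Pull the service's redacted copy of the single submitted document."""
    documents = response.get("results", {}).get("documents", [])
    if not documents:
        # Fail closed: without a verdict from the PII service, do not forward the prompt.
        raise ValueError("PII service returned no documents; refusing to forward prompt")
    return documents[0]["redactedText"]


sample_response = {"results": {"documents": [{"id": "1", "redactedText": "Contact ***************"}]}}
print(extract_redacted_text(sample_response))  # Contact ***************
```

Raising on an empty result is a deliberate design choice. If the PII check fails or returns nothing, the prompt should be blocked rather than silently passed through unredacted.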

4. Token Budget Enforcement

In 2026, we move from request-based rate limiting to token-based rate limiting. The llm-token-limit policy tracks actual consumption (prompt + completion tokens) and enforces a Tokens Per Minute (TPM) limit per counter key, which you can map to a team, application, or subscription.

```xml
<inbound>
    <!-- Enforce a 50,000 TPM quota per subscription -->
    <llm-token-limit
        counter-key="@(context.Subscription.Id)"
        tokens-per-minute="50000"
        estimate-prompt-tokens="true" />
</inbound>
```

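Setting estimate-prompt-tokens="true" makes APIM estimate prompt tokens from the request itself. A massive, runaway prompt that would exceed the remaining budget is then rejected with a 429 before it reaches the backend, preserving your model’s quota for other users.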
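The accounting behind a per-key TPM budget can be sketched in a few lines. This is a simplified model with two assumptions: fixed one-minute windows and an in-memory counter. APIM's actual accounting is internal to the gateway. Here `admit` plays the role of the pre-flight estimate, and `record` charges the real prompt-plus-completion usage reported in the response.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class TokenLimiter:
    """Per-key tokens-per-minute budget over fixed one-minute windows (a simplification)."""
    tokens_per_minute: int
    _windows: Dict[str, Tuple[int, int]] = field(default_factory=dict)  # key -> (window, used)

    def _used(self, key: str, now: float) -> int:
        window = int(now // 60)
        start, used = self._windows.get(key, (window, 0))
        return used if start == window else 0  # a new minute resets the count

    def admit(self, key: str, estimated_prompt_tokens: int, now: float) -> bool:
        """Pre-flight check: reject before the backend call if the estimate would overflow."""
        return self._used(key, now) + estimated_prompt_tokens <= self.tokens_per_minute

    def record(self, key: str, total_tokens: int, now: float) -> None:
        """Charge actual usage (prompt + completion) reported by the model's response."""
        window = int(now // 60)
        self._windows[key] = (window, self._used(key, now) + total_tokens)


limiter = TokenLimiter(tokens_per_minute=50_000)
limiter.record("team-a", 45_000, now=1.0)
print(limiter.admit("team-a", 6_000, now=2.0))  # False: 51,000 would exceed 50,000
print(limiter.admit("team-b", 6_000, now=2.0))  # True: separate counter key
```

Keying the counter on subscription ID is what isolates teams. One team's runaway pipeline drains only its own budget.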

5. Outbound Policy Chain: Logging and Audit

One of the greatest risks of AI adoption is the absence of an audit trail. APIM lets you log AI traffic to a workspace you own, satisfying compliance requirements without relying on Microsoft’s default retention.

Logging Metadata, Not Content

The APIM audit log should record metadata: who called which model, which deployment was used, and exactly how many tokens were consumed. Avoid logging the raw prompt text unless required by a specific regulatory framework—the logs themselves become a sensitive data store if you do.

```xml
<outbound>
    <!-- Log token usage (metadata only, no prompt text) to your own Event Hub -->
    <log-to-eventhub logger-id="ai-audit-logger">@{
        var usage = context.Response.Body.As<JObject>(preserveContent: true)?["usage"];
        return new JObject(
            new JProperty("timestamp", DateTime.UtcNow),
            new JProperty("subscriptionId", context.Subscription?.Id),
            new JProperty("deployment", context.Request.MatchedParameters["deployment-id"]),
            new JProperty("totalTokens", usage?["total_tokens"])
        ).ToString();
    }</log-to-eventhub>
</outbound>
```
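The same metadata-only record can be prototyped outside APIM, which helps when agreeing on a schema with whoever consumes the Event Hub. This sketch assumes a chat-completions response body with the standard `usage` object. The field names mirror the policy above, with prompt and completion counts added as an assumption about what auditors will want.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def build_audit_record(subscription_id: str, deployment: str,
                       response_body: Dict[str, Any],
                       now: Optional[datetime] = None) -> str:
    """Metadata-only audit event: who, which deployment, how many tokens. No prompt text."""
    usage = response_body.get("usage") or {}  # usage may be missing, e.g. on streamed responses
    record = {
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "subscriptionId": subscription_id,
        "deployment": deployment,
        "promptTokens": usage.get("prompt_tokens"),
        "completionTokens": usage.get("completion_tokens"),
        "totalTokens": usage.get("total_tokens"),
    }
    return json.dumps(record)


body = {"choices": [{"message": {"content": "LGTM"}}],
        "usage": {"prompt_tokens": 120, "completion_tokens": 30, "total_tokens": 150}}
print(build_audit_record("sub-123", "gpt-4o", body))
```

The completion text ("LGTM") never appears in the record. This keeps the audit store itself from becoming a copy of sensitive prompts and outputs.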

6. Semantic Caching

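For knowledge bases, FAQ bots, and common DevOps queries, many prompts (as many as 40% in some workloads) are semantically similar. APIM’s semantic caching policies (llm-semantic-cache-lookup and llm-semantic-cache-store) use an embeddings deployment to vectorize each prompt and match it against previously answered prompts. Matching prompts are served their cached response from an Azure Managed Redis instance.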

This reduces both token costs and latency. Disable it for code generation or creative tasks where response variance matters. Always partition the cache with <vary-by>@(context.Subscription.Id)</vary-by> so that one subscription (team or application) never receives a response cached from another subscription’s private data.
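The lookup logic reduces to a nearest-neighbor search inside a partition. The sketch below makes three assumptions:

- embeddings are already computed (in APIM, the embeddings deployment does this)
- similarity is measured with cosine similarity
- a hit requires an arbitrary 0.95 threshold

The metric and threshold are not APIM's internal scoring. The `partition` argument plays the role of `vary-by`.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


@dataclass
class SemanticCache:
    """Embedding-similarity cache partitioned by a vary-by key (here: subscription ID)."""
    min_similarity: float = 0.95  # assumed threshold; tune per workload
    _entries: Dict[str, List[Tuple[Vector, str]]] = field(default_factory=dict)

    def lookup(self, partition: str, embedding: Vector) -> Optional[str]:
        """Return the most similar cached response in this partition, if it clears the threshold."""
        best, best_score = None, self.min_similarity
        for cached_vec, cached_response in self._entries.get(partition, []):
            score = cosine_similarity(embedding, cached_vec)
            if score >= best_score:
                best, best_score = cached_response, score
        return best

    def store(self, partition: str, embedding: Vector, response: str) -> None:
        self._entries.setdefault(partition, []).append((embedding, response))


cache = SemanticCache(min_similarity=0.95)
cache.store("sub-a", [1.0, 0.0, 0.0], "Restart the build agent.")
print(cache.lookup("sub-a", [0.99, 0.05, 0.0]))  # Restart the build agent.
print(cache.lookup("sub-b", [1.0, 0.0, 0.0]))    # None: different partition
```

The identical vector in `sub-b` misses because lookups never cross partitions. That is exactly the isolation the `vary-by` element provides.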

Key Takeaways

- Centralize the Control Plane: APIM gives you a single place to manage aggregate AI usage, cost, and security across multiple teams.

- Redact at the Inbound Layer: Prevention beats detection. Sanitize prompts before they reach the model.

- TPM is the New RPM: Use token-based rate limiting (llm-token-limit) to prevent quota exhaustion in shared environments.

- Own the Audit Trail: Log token usage and metadata to your own Log Analytics workspace via Event Hub.

- Natively Scale: Use Backend Pools and Circuit Breakers to handle failover across regional OpenAI deployments without complex XML logic.

Next Steps:

- Read [Cluster Post 2] to see how APIM audit logging complements Zero Data Retention (ZDR).

- Read [Cluster Post 7] to implement AI-driven secrets scanning in your CI/CD pipelines to catch credentials before they reach the gateway.

- Return to the [Pillar Post] to see how the AI Gateway fits into the full six-layer blueprint.